Using these decorators makes the code more intuitive and make easier to task () def extract_from_api (): # TODO: Fetch the data from an API and store it where it can be This is done by encapsulating in decorators all the boilerplate needed in the past. Taskflow simplifies how a DAG and its tasks are declared. And this was an example imagine how much of this code there would be in a real-life pipeline! The Taskflow way, DAG definition using Taskflow Without Taskflow, we ended up writing a lot of repetitive code. Pass with DAG ( 'awesome_etl_v1' ) as dag : ext1 = PythonOperator ( task_id = 'extract_from_api', python_callable = extract_from_api, provide_context = True, ) ext2 = PythonOperator ( task_id = 'extract_from_db', python_callable = extract_from_api, provide_context = True, ) trn = PythonOperator ( task_id = 'transform', python_callable = extract_from_api, provide_context = True, ) load = PythonOperator ( task_id = 'load', python_callable = extract_from_api, provide_context = True, ) << trn << load Pass def load ( ** kwargs ): # TODO: Read the transformed data and save it where it can be analyzed later # then store it where it can be read later

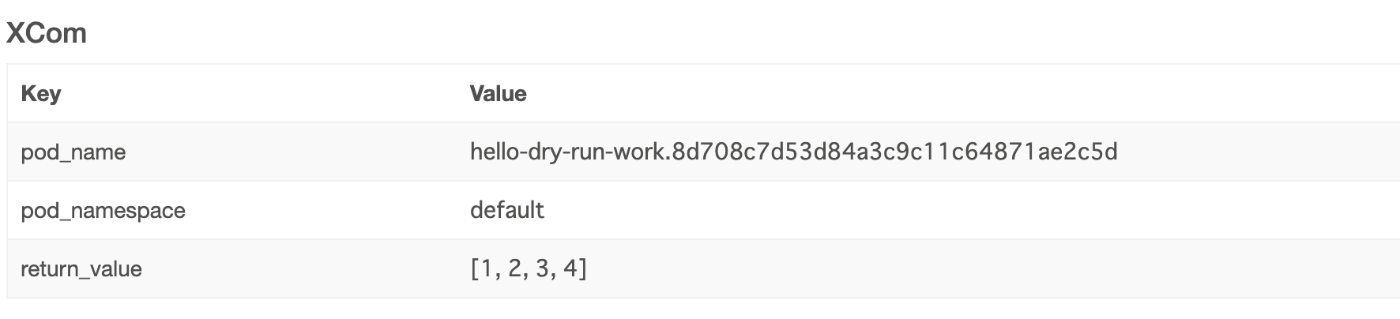

Pass def transform ( ** kwargs ): # TODO: Read the data extracted, transform it to fit our needs and Pass def extract_from_db ( ** kwargs ): # TODO: Fetch the data from our database and store it where it can Traditionally, we’d write our DAGs following a structure similar to the one shown next:ĭef extract_from_api ( ** kwargs ): # TODO: Fetch the data from an API and store it where it can be The old way, DAG Definition before Taskflowįor our ETL process, we need to implement some tasks to get the data out of its source, transform it, and load it into the persistent storage. You can find the complete code on GitHub following this link. Finally, we’d store the transformed data in persistent storage to be consumed later by the analysis team. We want to process the information we’ve recovered from those two sources, unify the data’s schema, and keep relevant information for us. The data comes from two different sources: a third-party CRM via a RESTfull API and an owned relational database. Imagine you need to write an ETL process with Airflow to process sales information about our customers. It seems pretty straightforward, right? But it also comes with several tools to make our life easier, primarily if most of your DAGs are written as Python tasks. The Taskflow API is an abstraction built on top of XComs that allows developers to send messages between tasks in a DAG (Directed Acyclic Graph). This blog post will show why you should too.

After considering whether we should adopt this new feature, we finally decided to make Taskflow a part of our daily work. And we especially had a good time working with its new Taskflow API. Since then we’ve had the opportunity to experience most of them. It would be great to see Airflow or Apache separate Airflow-esque task dependency into its own microservice, as it could be expanded to provide dependency management across all of your systems, not just Airflow.Airflow introduced several changes in its second version a year ago. Tasks with dependencies on this legacy replication service couldn’t use Task Sensors to check if their data is ready. While external services can GET Task Instances from Airflow, they unfortunately can’t POST them. However, what if the upstream dependency is outside of Airflow? For example, perhaps your company has a legacy service for replicating tables from microservices into a central analytics database, and you don’t plan on migrating it to Airflow.

You could use this to ensure your Dashboards and Reports wait to run until the tables they query are ready. Even better, the Task Dependency Graph can be extended to downstream dependencies outside of Airflow! Airflow provides an experimental REST API, which other applications can use to check the status of tasks. The External Task Sensor is an obvious win from a data integrity perspective. Sql="SELECT * FROM table WHERE created_at_month = '`", # Run SQL in BigQuery and export results to a tableįrom _operator import BigQueryOperatorĭestination_dataset_table='',

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed